Friday May 22, 2020

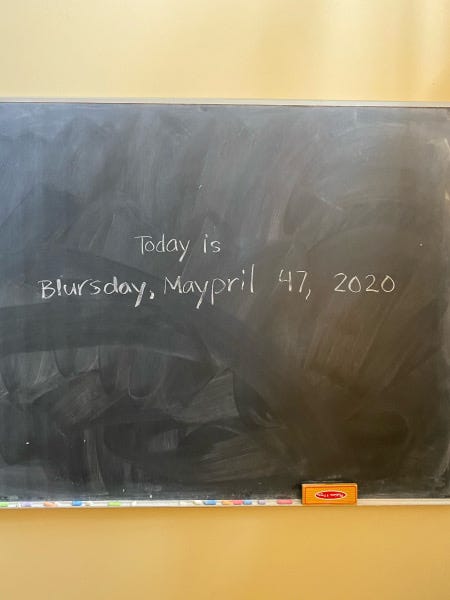

So what day is it, actually?

Seen in a tech company office the other day.

Nearly half of Twitter accounts tweeting about Coronavirus are probably bots

Interesting report from NPR.

Nearly half of the Twitter accounts spreading messages on the social media platform about the coronavirus pandemic are likely bots, researchers at Carnegie Mellon University said Wednesday.

Researchers culled through more than 200 million tweets discussing the virus since January and found that about 45% were sent by accounts that behave more like computerized robots than humans.

It is too early to say conclusively which individuals or groups are behind the bot accounts, but researchers said the tweets appeared aimed at sowing division in America.

This vividly reinforces the message in Phil Howard’s new book, Lie Machines: How to Save Democracy from Troll Armies, Deceitful Robots, Junk News Operations and Political Operatives (Yale, 2020), which I’m currently reading.

Also it hardly needs saying (does it?) but nobody should think that what happens on Twitter provides a guide to what is actually going on in the real world. It’d be good if more journalists realised that.

Main Street in America: 62 Photos That Show How COVID-19 Changed the Look of Everyday Life

Lovely set of pics from an Esquire magazine project. Still photography reaches parts of the psyche that video can’t touch.

Lots of interesting photographs. Worth a look. But give it time.

Everybody knows…

A reader (to whom much thanks) was struck by my (corrected) reference to Joni Mitchell the other day and sent me a clip from Leonard Cohen’s song, Everybody Knows. This bit in particular strikes home:

Everybody knows that the dice are loaded

Everybody rolls with their fingers crossed

Everybody knows that the war is over

Everybody knows the good guys lost

Everybody knows the fight was fixed

The poor stay poor, the rich get rich

That’s how it goes

Everybody knows

Everybody knows that the boat is leaking

Everybody knows that the captain lied

Everybody got this broken feeling

Like their father or their dog just died

We need power-steering for the mind, not autonomous vehicles

Following on from yesterday’s discussion of humans being treated as ‘moral crumple zones’ for the errors of so-called autonomous systems, there’s an interesting article in today’s New York Times on Ben Shneiderman, a great computer scientist (and an expert on human-computer interaction), who has been campaigning for years to get the more fanatical wing of the AI industry to recognise that what humanity needs is not so much fully-autonomous systems as ones that augment human capabilities.

This is a debate that goes back at least to the 1960s, when the pioneers of networked computing like JCR Licklider and Douglas Engelbart argued that the purpose of computers is to augment human capabilities (provide “power-steering for the mind” is how someone once put it) rather than to take humans out of the loop. What else, for example, is Google search than a memory prosthesis for humanity? In other words, an augmentation.

This clash of worldviews comes to a head in many fields now — employment, for example. There’s not much argument, I guess, about building machines to do work that is really dangerous or psychologically damaging. Think of bomb disposal, on the one hand, or mindlessly repetitive tasks that in the end sap the humanity out of workers and are very badly paid. These are areas where, if possible, humans should be taken out of the loop.

But autonomous vehicles — aka self-driving cars — represent a moment where the two mindsets really collide. Lots of corporations (Uber, for instance) can’t wait for the moment when they can dispense with those tiresome human drivers. At the moment, they are frustrated by two categories of obstacle.

The first is a lack (still) of technological competence: the kit still isn’t up to the job of managing the complexity of edge cases, which is where the usefulness of humans as crumple zones comes in, because they act as ‘responsibility sponges’ for corporations.

The second is the colossal infrastructural changes that society would have to make if autonomous vehicles were to become a reality. AI evangelists will say that these changes are orders of magnitude smaller than the changes that were made in order to accommodate the traditional automobile. But nobody has yet made an estimate of the costs to society of changing the infrastructure of cities to accommodate the technology. And of course these costs will be borne more by taxpayers than by the corporations who profit from the cost-reductions implicit in not employing drivers. It’ll be the usual scenario: the privatisation of profits, and the socialisation of costs.

Into this debate steps Ben Shneiderman, a University of Maryland computer scientist who has for decades warned against blindly automating tasks with computers. He thinks that the tech industry’s vision of fully-automated cars is misguided and dangerous. Robots should collaborate with humans, he believes, rather than replace them.

Late last year, Dr. Shneiderman embarked on a crusade to convince the artificial intelligence world that it is heading in the wrong direction. In February, he confronted organizers of an industry conference on “Assured Autonomy” in Phoenix, telling them that even the title of their conference was wrong. Instead of trying to create autonomous robots, he said, designers should focus on a new mantra, designing computerized machines that are “reliable, safe and trustworthy.”

There should be the equivalent of a flight data recorder for every robot, Dr. Shneiderman argued.

I can see why the tech industry would like to get rid of human drivers. On balance, roads would be a lot safer. But there is an intermediate stage that is achievable and would greatly improve safety without imposing a lot of the social costs of accommodating fully autonomous vehicles. It’s an evolutionary path involving the steady accumulation of the driver-assist technologies that already exist.

I happen to like driving — at least some kinds of driving, anyway. I’ve been driving since 1971 and have — mercifully — never had a serious accident. But on the other hand, I’ve had a few near-misses where lack of attention on my part, or on the part of another driver, could have had serious consequences.

So what I’d like is far more technology-driven assistance. I’ve found cruise-control very helpful — especially for ensuring that I obey speed-limits. And sensors that ensure that when parking I don’t back into other vehicles. But I’d also like forward-facing radar that, in slow-moving traffic, would detect when I’m too close to a car in front and apply the brakes if necessary — and spot a fox running across the road on a dark rainy night. I’d like lane-assist tech that would spot when I’m wandering on a motorway, and all-round video cameras that would overcome the blind-spots in mirrors and a self-parking system. And so on. All of this kit already exists, and if widely deployed would make driving much safer and more enjoyable. None of it requires the massive breakthroughs that current autonomous systems require. No rocket science required. Just common sense.

The important thing to remember is that this isn’t just about cars, but about AI-powered automation generally. As the NYT piece points out, the choice between elimination and augmentation is going to become even more important when the world’s economies eventually emerge from the devastation of the pandemic and millions who have lost their jobs try to return to work. A growing number of them will find they are competing with or working side by side with machines. And under the combination of neoliberal obsessions about eliminating as much labour as possible, and punch-drunk acceptance of tech visionary narratives, the danger is that societies will plump for elimination, with all the dangers for democracy that that could imply.

A note from your University about its plans for the next semester

Dear Students, Faculty, and Staff —

After careful deliberation, we are pleased to report we can finally announce that we plan to re-open campus this fall. But with limitations. Unless we do not. Depending on guidance, which we have not yet received.

Please know that we eventually will all come together as a school community again. Possibly virtually. Probably on land. Maybe some students will be here? Perhaps the RAs can be let in to feed the lab rats?

We plan to follow the strictest recommended guidance from public health officials, except in any case where it might possibly limit our major athletic programs, which will proceed as usual…

From McSweeney’s